How SpaceX does Software Engineering

A 2025 Deep Dive

Introduction

SpaceX has transformed from a scrappy startup to a dominant force in aerospace, not by following the industry playbook, but by rewriting it, often literally. In 2020, the company made headlines by safely delivering NASA astronauts to the ISS aboard Crew Dragon, ending nearly a decade of U.S. reliance on foreign spacecraft. But that milestone was only the beginning. As of 2025, SpaceX is regularly launching astronauts, deploying thousands of satellites for global internet, and testing Starship, a fully reusable, super heavy-lift vehicle aimed at deep space missions.

Beneath the roar of engines and booster landings lies SpaceX’s real edge: its software. The company’s aggressive launch cadence, ability to iterate rapidly, and unmatched vehicle reuse are all underpinned by lean, sophisticated software systems. Whether it’s Starlink satellites receiving over-the-air updates in orbit, Crew Dragon executing fully autonomous docking maneuvers, or Starship’s booster being caught mid-air by robotic launch tower arms, software is central to SpaceX’s operations.

Starship, in particular, embodies the convergence of hardware and software innovation. From its first full-stack test in 2023 to precision tower landings in 2024, the vehicle’s evolution has been shaped by a rapid cycle of flight data, simulation, and code refinement. Similarly, the Starlink constellation has scaled to over 6,700 satellites, managed by cloud-native control systems with AI-enhanced telemetry analysis and canary deployments. Meanwhile, Crew Dragon continues to fly safely, supported by Class A-certified flight software built with fault-tolerant autonomy in mind.

In 2020, I wrote an article titled “How SpaceX Develops and Tests Software” that explored the engineering marvel behind one of the world’s most ambitious space companies. This updated article revisits how SpaceX builds and operates its software, diving deep into development practices, testing infrastructure, DevOps culture, and system design. It’s a story of high reliability at high velocity, of automation over bureaucracy, and of engineers wielding CI pipelines and simulation frameworks as confidently as astronauts strap into rockets. If you’re a developer, SpaceX’s approach isn’t just inspirational, it’s actionable. Let’s explore how they do it.

Lean Software Engineering Practices at SpaceX

One of SpaceX’s defining strengths is its lean, vertically-integrated software team and a culture that blurs the lines between development and operations. Unlike traditional aerospace programs that might have separate teams for development, verification, and quality assurance, SpaceX’s approach is holistic: “we don’t separate QA from development – every engineer writing software is also expected to contribute to its testing”. This DevOps ethos means that the same people who write code also write tests, run simulations, and quickly iterate on fixes. The company’s software organization is strikingly small given its output. Around 2019, SpaceX had about 50 software developers responsible for all flight software across its fleet of rockets and spacecraft, whereas a comparable government project might employ 2,500 people for the same scope. Even accounting for growth in the Starlink era, SpaceX’s team size is orders of magnitude leaner than industry norms. How can such a small team manage the software for Falcon 9, Falcon Heavy, Dragon, Starlink satellites, and now Starship? The answer lies in focus, smart reuse, and aggressive automation.

Reuse and Modular Design: From early on, SpaceX emphasized modularity in both hardware and software design. For example, the Falcon rocket family is essentially a scalable cluster of a common engine module – one Merlin engine on Falcon 1, nine on Falcon 9, and 27 on Falcon Heavy. This hardware modularity simplifies software development: the same control software can run on a single-engine test stand or a nine-engine booster, with the difference mostly in configuration. SpaceX builds its flight software as a set of components with clear boundaries and interfaces, each handling a specific subsystem (engines, navigation, communications, etc.). By partitioning the code into smaller modules, engineers can fully test each in isolation before integrating them into the whole system. This modular approach also supports parallel development – different teams (or individuals) can work on separate pieces without stepping on each other, then combine and verify them in simulation. Crucially, common code is reused across vehicles: lessons learned and libraries written for Falcon 9 and Dragon were leveraged for Starship’s software. Elon Musk has noted that Starship’s early flights used a lot of “Falcon code” initially, allowing the team to get off the ground faster. Over time those modules are optimized for Starship’s unique needs (like controlling 33 Raptors and active flaps), but the reuse jump-starts development and ensures a baseline of maturity. In software engineering terms, SpaceX treats their rockets as different products built on a shared platform or codebase. This is a stark contrast to traditional aerospace where new programs often start from scratch or have heavy, unique codebases.
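The "same software, different configuration" idea can be sketched in a few lines. This is a minimal illustration, not SpaceX's actual code: `VehicleConfig`, `EngineCluster`, the configuration keys, and the thrust value are all hypothetical names and placeholders chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VehicleConfig:
    """Hypothetical per-vehicle configuration (all names are illustrative)."""
    name: str
    engine_count: int
    max_thrust_kn: float  # per engine; placeholder value, not a real figure


class EngineCluster:
    """One control module, reused unchanged for every configuration."""

    def __init__(self, config: VehicleConfig) -> None:
        self.config = config
        # One throttle setting (0.0 to 1.0) per engine.
        self.throttles = [0.0] * config.engine_count

    def set_throttle(self, level: float) -> None:
        # Clamp the command so an out-of-range input cannot exceed limits.
        level = max(0.0, min(1.0, level))
        self.throttles = [level] * self.config.engine_count

    def total_thrust_kn(self) -> float:
        return sum(t * self.config.max_thrust_kn for t in self.throttles)


# Only the configuration differs between vehicles; the class above does not.
CONFIGS = {
    "test_stand": VehicleConfig("single-engine test stand", 1, 100.0),
    "booster_9": VehicleConfig("nine-engine booster", 9, 100.0),
    "heavy_27": VehicleConfig("twenty-seven-engine vehicle", 27, 100.0),
}

if __name__ == "__main__":
    for key, config in CONFIGS.items():
        cluster = EngineCluster(config)
        cluster.set_throttle(0.5)
        print(key, cluster.total_thrust_kn())
```

Because the engine count lives in data rather than in code, a test written against the single-engine configuration exercises the same logic that later runs with nine or twenty-seven engines.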

Agile, Fail-Fast Culture: SpaceX’s software process mirrors its hardware approach, iterative and agile. The company is not afraid to push changes quickly and even allow failures in the test phase, as long as they learn and improve. “SpaceX embraced failure… each setback served as a valuable learning opportunity”, notes one analysis. Internally, they break down large projects into smaller, quickly deliverable components, demonstrating something working end-to-end early. New features or fixes are developed on short cycles: an engineer might pick up a ticket, implement and test it in days, and merge it once it passes rigorous checks. The concept of a “responsible engineer” is used – one person owns a change from design through implementation and verification. This accountability ensures issues are thoroughly understood by someone and followed to closure. Yet, collaboration is key: code reviews are mandatory, and multiple engineers often contribute to critical code paths for redundancy in thought. When a change is ready, it’s integrated and can be scheduled for the next flight or deployed to operational systems like Starlink very quickly. This rapid deployment is possible only because of extremely high confidence in their testing (as we’ll see below). It’s common at SpaceX for software to be updated between back-to-back launches; for instance, if telemetry from today’s flight indicates a need to tweak an engine control parameter, a patch might be validated in simulation and applied to the very next mission. SpaceX’s ability to “fly software changes” so frequently, rather than waiting for multi-year update cycles, gives it a huge agility advantage.

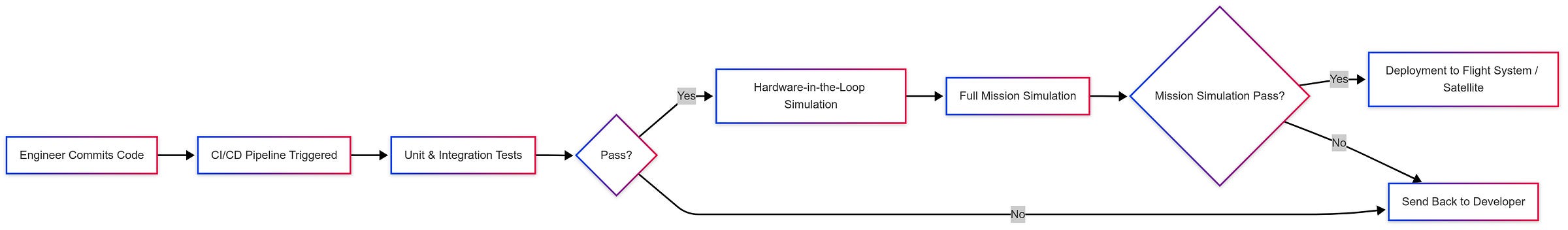

Continuous Delivery & DevOps Tooling: SpaceX has invested heavily in internal tooling to support its fast pace. According to the former U.S. Air Force Chief Software Officer who visited SpaceX, they have a dedicated team that approves new software tools within 24 hours and a testing team that can fully emulate a rocket in software before launch. The result is astounding: SpaceX can push new software into production up to 17,000 times a day with confidence. In practice this refers to their automated test and deployment pipeline – every code commit triggers a battery of tests, and the system can integrate changes at an almost continuous rhythm. SpaceX’s own engineers have described a custom continuous integration (CI) system that handles this workload: it runs on a farm of roughly 550 servers (ranging from 2-core to 28-core machines) to execute builds and simulations in parallel. Remarkably, they run around a million CI build/test jobs per month, covering everything from unit tests to full mission simulations. The CI system is backed by tools like HTCondor (a workload scheduler) for queuing jobs and managing compute resources. By using technologies like Docker containers, each test runs in a clean, reproducible environment, eliminating “it works on my machine” issues. The CI pipeline is deeply integrated with both software and hardware – it doesn’t just build code, it also triggers tests on actual hardware rigs as needed.
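To put those figures in perspective, here is a back-of-the-envelope calculation using only the numbers quoted above (about a million jobs per month, roughly 550 servers). It assumes a 30-day month, an even spread of jobs, and one job at a time per server, none of which is stated in the source, so treat the result as a rough scale rather than a measurement.

```python
def jobs_per_server_per_day(jobs_per_month, servers, days_per_month=30):
    """Average number of CI jobs each server handles per day."""
    return jobs_per_month / days_per_month / servers


def average_minutes_per_job(jobs_per_server_day):
    """Wall-clock minutes available per job if a server runs jobs back to back."""
    return 24 * 60 / jobs_per_server_day


if __name__ == "__main__":
    per_day = jobs_per_server_per_day(1_000_000, 550)
    print(f"{per_day:.1f} jobs per server per day")
    print(f"{average_minutes_per_job(per_day):.2f} minutes per job on average")
```

Under these assumptions each server handles about 60 jobs a day, which leaves an average budget of a little under 24 minutes per job. Multi-core machines running several jobs in parallel would stretch that budget accordingly.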

Continuous Testing and Simulation Infrastructure

To ensure reliability in such rapid development, SpaceX leans on an extensive test infrastructure, arguably one of the most advanced in the industry. The goal is to know exactly how the software will behave in all scenarios before it ever flies. Key elements of their testing approach include:

Hardware-in-the-Loop (HITL) Testbeds: SpaceX maintains one-off test stands that contain the real flight computers and electronics (all the “guts” of the rocket) laid out in a lab, connected to sensors and actuators, but without the hazardous engines or propellant. They jokingly call these setups “HITLs” (pronounced “hittles”). For example, they have a Dragon 2 testbed with actual seat actuators and life support hardware, and Falcon/Starship testbeds with avionics and telemetry systems. The flight software is loaded onto these real flight computers and then exercises simulated missions. Because it’s the actual hardware, the software thinks it’s flying a rocket, when in reality it’s all happening on a lab bench. By tying these into the CI system, SpaceX can automatically run regression tests that involve real hardware on every code change or on schedule. This is incredibly effective at catching integration issues, timing problems, and hardware-software interface bugs that pure software simulation might miss. It’s test automation taken to the extreme, where a commit might trigger, for instance, a full-duration simulated launch on the HITL rig at 2 AM, with engineers arriving in the morning to a detailed report of any anomalies.

Full-Stack Software Simulation: Alongside HITL, SpaceX runs high-fidelity software simulations (often called “hardware-out-of-the-loop” tests) for entire mission profiles. These simulations incorporate physics, orbital mechanics, engine thrust curves, sensor noise, etc., to mimic how the vehicle would fly. One SpaceX engineer noted they can simulate something like “flying Dragon from liftoff to docking” inside the CI pipeline. Similarly, for Starlink satellites, they simulate a satellite pass over a ground station: they have the satellite’s software talk to a simulated ground antenna and even model relative motion. In one example, to test Starlink’s networking, they fix a virtual ground station antenna and simulate the satellite moving overhead, overriding the software’s perception so it “thinks” it’s in orbit. This lets them verify the full RF link and data flow. If certain aspects (like actual antenna pointing) can’t be tested in that setup, they complement it with pure software tests for those. The guiding principle is to break down every mission phase and make sure each is exercised under test – either in simulation, on real hardware, or both. SpaceX’s continuous testing philosophy uses a mix of unit tests, integration tests, hardware tests, and end-to-end mission rehearsals to cover “all the important aspects of the system”.
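The trick of overriding the software's perception of where it is can be sketched with simple dependency injection. Everything here is an assumption made for illustration: the `LinkManager` class, the 25-degree minimum elevation, the 550 km altitude, and the flat-ground geometry are placeholders, not details of Starlink's software.

```python
import math


def elevation_deg(sat_x_km, sat_alt_km, station_x_km=0.0):
    """Elevation of the satellite above the horizon (flat-ground simplification)."""
    ground_distance = abs(sat_x_km - station_x_km)
    return math.degrees(math.atan2(sat_alt_km, ground_distance))


class LinkManager:
    """Stand-in for code that decides whether a ground link is usable."""

    def __init__(self, position_source, min_elevation_deg=25.0):
        # The position source is injected, so a test can replace the real
        # navigation solution with a simulated track.
        self.position_source = position_source
        self.min_elevation_deg = min_elevation_deg

    def link_usable(self):
        x_km, alt_km = self.position_source()
        return elevation_deg(x_km, alt_km) >= self.min_elevation_deg


def simulate_pass(track_km, alt_km=550.0):
    """Feed a simulated overhead pass to the link logic, one sample per step."""
    samples = iter(track_km)
    manager = LinkManager(lambda: (next(samples), alt_km))
    return [manager.link_usable() for _ in track_km]


if __name__ == "__main__":
    track = [-2000, -1500, -1000, -500, 0, 500, 1000, 1500, 2000]
    print(simulate_pass(track))
```

The code under test never learns that its position comes from a list rather than from orbit, which is the point: the same decision logic runs in the simulation and in flight.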

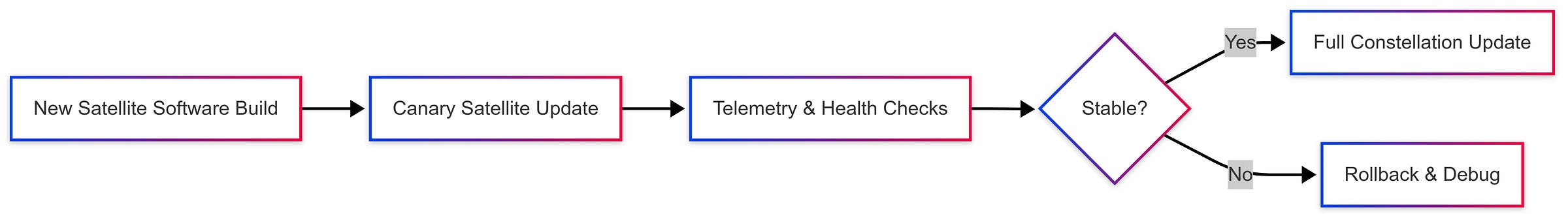

Automated Regression and Canary Testing: Whenever new code is written, it’s subjected to a gauntlet of automated regression tests. SpaceX has a team dedicated to maintaining these CI/CD tools and simulation infrastructure. This includes managing the massive compute load and ensuring tests remain stable (flaky tests are the enemy of fast-moving development). They employ techniques like change-based testing, running targeted test sets based on what code was modified, to optimize pipeline time. The use of analytics is also key: SpaceX gathers metrics from test runs and has web dashboards showing performance graphs, pass/fail trends, and resource usage. This data-driven approach allows them to continually tune the process (for instance, automatically skipping redundant tests or focusing on high-risk areas). The company has also explored AI/ML for test optimization, using machine learning to analyze test results and suggest minimal test sets or to detect subtle anomalies in telemetry that might indicate a potential bug. While Elon Musk has commented that SpaceX “uses basically no AI” in its core rocket operations (preferring deterministic algorithms for guidance and control), behind the scenes they are not shy about using advanced software techniques to improve development efficiency. Additionally, as mentioned earlier, the Starlink constellation’s canary deployment strategy is a novel form of testing: after passing all ground tests, new satellite code is deployed to one or a few satellites in orbit and observed in real-world conditions. If any issue arises, it’s isolated to a small subset and can be fixed before wide rollout. This practice, more common in software services than spacecraft, dramatically reduces risk for a constellation of thousands of active satellites.
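Change-based testing, as described above, boils down to mapping modified files to the test suites that cover them. The sketch below is one simple way to do that; the path patterns, suite names, and the "run everything on an unknown file" rule are assumptions for illustration, not SpaceX's pipeline.

```python
from fnmatch import fnmatchcase

# Hypothetical mapping from source path patterns to the suites that cover them.
TEST_MAP = {
    "flight/engines/*": {"unit_engines", "sim_ascent"},
    "flight/nav/*": {"unit_nav", "sim_ascent", "sim_docking"},
    "ground/*": {"unit_ground"},
}
ALWAYS_RUN = {"smoke"}
FULL_SUITE = set().union(ALWAYS_RUN, *TEST_MAP.values())


def select_tests(changed_files):
    """Return the sorted list of suites to run for a set of changed files."""
    selected = set(ALWAYS_RUN)
    for path in changed_files:
        matched = False
        for pattern, suites in TEST_MAP.items():
            if fnmatchcase(path, pattern):
                selected |= suites
                matched = True
        if not matched:
            # Unmapped file: be conservative and run the full suite.
            return sorted(FULL_SUITE)
    return sorted(selected)


if __name__ == "__main__":
    print(select_tests(["flight/engines/throttle.py"]))
    print(select_tests(["docs/README.md"]))
```

The conservative fallback matters: a selection scheme that silently skips tests for files it does not recognize trades pipeline time for missed regressions.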

Tripwire and Fault Injection Testing: SpaceX tests not only nominal scenarios but also failure modes. Engineers will inject faults into simulations – like killing one of the flight computers mid-run – to verify the system fails gracefully. They test network dropouts, sensor failures, and engine-out scenarios to ensure the software’s fault detection and isolation logic works. This paid off in real life: on a 2020 Falcon 9 Starlink mission, one of the nine Merlin engines shut down early during ascent, but the onboard software simply adjusted the throttle and burn time of the others to compensate, delivering all satellites to the correct orbit. The mission succeeded despite the engine failure, a testament to designing and testing for redundancy. (As Elon Musk quipped, “Shows value of having 9 engines!”) Falcon 9 is designed to handle engine-out and even multiple engine failures in some cases, and those contingencies are rigorously simulated pre-flight. This philosophy extends to all systems: assume things will go wrong, and code the software to handle it. SpaceX’s software team approaches this by “writing defensive logic, and checking the status of each operation” – if something expected doesn’t occur, the software has predefined recovery paths or safe-states. For example, if a valve fails to open when commanded, the code might retry or switch to a backup system, and at minimum not allow the mission to proceed in an unsafe condition. By the time a rocket counts down to T-0, SpaceX’s software has already practiced that launch hundreds or thousands of times under every imaginable condition. This relentless testing gives both the team and their customers (like NASA or the Air Force) confidence that new software changes won’t introduce unforeseen bugs.
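The valve example above (retry, then switch to a backup, then refuse to proceed) can be sketched together with a simple form of fault injection. The `Valve` class, its `stuck_for` parameter, and the retry count are hypothetical and exist only to make the pattern testable.

```python
class Valve:
    """Simulated valve; `stuck_for` injects a fault for that many commands."""

    def __init__(self, name, stuck_for=0):
        self.name = name
        self.stuck_for = stuck_for
        self.is_open = False

    def command_open(self):
        if self.stuck_for > 0:
            self.stuck_for -= 1  # injected fault: the command has no effect
        else:
            self.is_open = True


def open_with_recovery(primary, backup, max_retries=2):
    """Open a valve defensively; return 'primary', 'backup', or 'safe_state'."""
    for _ in range(1 + max_retries):
        primary.command_open()
        if primary.is_open:  # check the result, never assume success
            return "primary"
    backup.command_open()
    if backup.is_open:
        return "backup"
    # Neither path worked: do not proceed in an unsafe condition.
    return "safe_state"


if __name__ == "__main__":
    print(open_with_recovery(Valve("main"), Valve("spare")))
    print(open_with_recovery(Valve("main", stuck_for=5), Valve("spare")))
    print(open_with_recovery(Valve("main", stuck_for=5), Valve("spare", stuck_for=5)))
```

Each injected fault drives the code down a different recovery path, so the test suite can confirm that every branch of the defensive logic has actually been executed at least once.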

One illustrative comparison comes from Boeing’s Starliner program (a more traditional aerospace effort). In 2019, Starliner’s first uncrewed test flight failed due to software issues: one bug, a mis-set mission timer, prevented orbital insertion, and another nearly caused a catastrophic collision during separation before reentry. An investigation found that Boeing had not run a full, end-to-end integrated software simulation; they tested pieces in isolation and used some emulated components, missing the interaction issues. Boeing’s program manager admitted that more thorough, system-level testing on the ground would have caught these errors. SpaceX, by contrast, essentially does an end-to-end mission test for every software change, using real hardware where possible. This difference in testing rigor, enabled by automation, highlights why SpaceX’s fast-moving approach can still maintain reliability. It’s a DevOps mantra in action: automate everything, test continuously, and practice like you fly.

Reliability Through Redundancy: Triplex and Fault-Tolerance

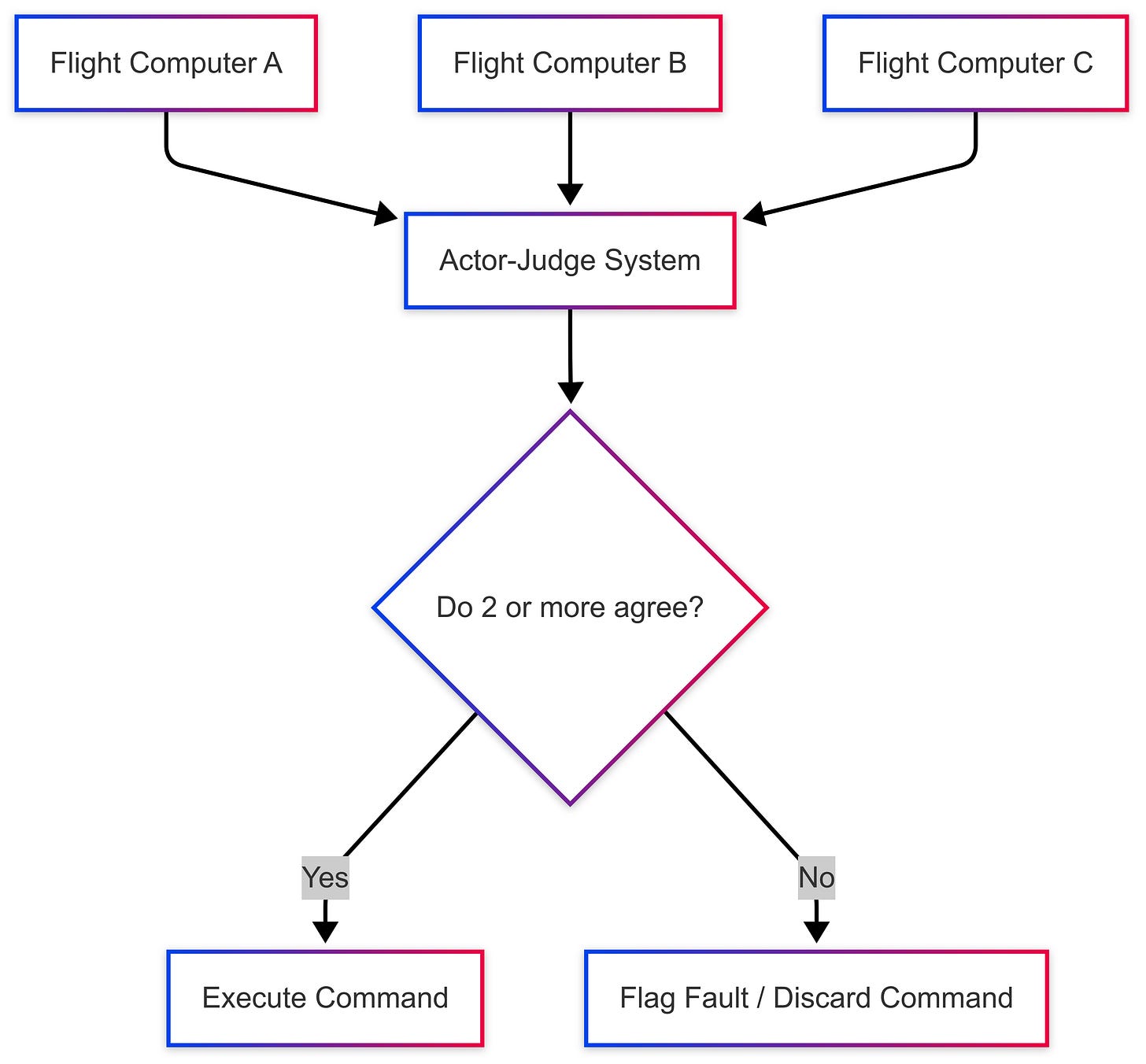

Launching rockets and operating spacecraft is inherently risky, so SpaceX’s software is engineered with robust fault-tolerance and redundancy. A cornerstone of this is SpaceX’s use of triplex redundancy in flight control computers. The Falcon 9 and Falcon Heavy utilize a trio of independent flight computers running in parallel, often referred to as “flight strings”. Each flight computer string has dual-core x86 processors (running Linux on each core) and runs an identical copy of the flight software written in C/C++. On any given control cycle (which might be, say, 50 times per second), all three computers take in sensor data and independently compute the next commands (engine throttles, guidance adjustments, etc.). A voting logic called an Actor-Judge system then compares the results: if all three agree, the command is sent to the actuators; if one disagrees (produces a divergent result), that “bad” string is outvoted by the other two. In fact, if one computer misbehaves (due to a radiation bit-flip or hardware fault), the system can automatically drop it and continue operating on the remaining two. The Falcon 9 can complete its mission even if it’s down to a single string, although that situation is extremely unlikely.
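The voting step can be illustrated with a plain majority voter. This is a generic sketch of two-out-of-three voting, not the Actor-Judge implementation itself; the function names, string identifiers, and exact-equality comparison of commands are assumptions (a real system might compare within a tolerance).

```python
from collections import Counter


def vote(outputs):
    """Majority-vote the commands computed by the healthy strings.

    outputs maps a string id to the command it computed (any hashable value).
    Returns (winning_command, ids_of_disagreeing_strings).
    """
    if not outputs:
        raise RuntimeError("no healthy strings left")
    command, count = Counter(outputs.values()).most_common(1)[0]
    if len(outputs) > 1 and count <= len(outputs) / 2:
        raise RuntimeError("no majority among strings")
    faulty = sorted(sid for sid, out in outputs.items() if out != command)
    return command, faulty


def control_cycle(computed, healthy):
    """Run one cycle: vote among healthy strings and drop any that disagree."""
    outputs = {sid: computed[sid] for sid in healthy}
    command, faulty = vote(outputs)
    return command, healthy - set(faulty)


if __name__ == "__main__":
    healthy = {"A", "B", "C"}
    # String C suffers a simulated bit flip and computes a different command.
    command, healthy = control_cycle({"A": (70, 0), "B": (70, 0), "C": (99, 5)}, healthy)
    print(command, sorted(healthy))
```

Once a string has been dropped, the remaining two can still detect a disagreement but can no longer tell which side is right, which is why the sketch raises an error in that case instead of guessing.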

This architecture gives Falcon 9 tolerance to processor errors without needing each processor to be an expensive radiation-hardened unit. SpaceX opted to use relatively standard processors (x86) and rely on triple redundancy for reliability, since the atmospheric flight time is short enough that cosmic radiation exposure is minimal. (For longer-duration on-orbit systems, radiation-hardened CPUs are typically used, but for launch vehicles SpaceX found a clever balance with redundancy.) The software is designed such that any single-string failure is immediately detected and isolated. This approach significantly reduces the probability of a total failure due to a computer glitch.

The triplex system is just one layer; beyond that, there are numerous redundant sensors and actuators. For example, Falcon rockets have multiple inertial measurement units (IMUs), redundant avionics power feeds, backup communications links, etc. The software cross-checks sensor readings against each other and against expected physics to reject spurious data. If one GPS unit outputs nonsense, the navigation software will detect the inconsistency and disregard that source. This requires careful coding – lots of validation logic and fallback scenarios, effectively encoding engineering judgment into the software. SpaceX engineers approach this systematically through defensive programming: assume any given input could be wrong and verify it. They also ensure that if something does go wrong, the software knows how to fail safe. For instance, if a serious fault is detected, the system might trigger an abort (as with Dragon’s launch escape system) or safely shut down certain systems. A vivid demonstration came during an uncrewed Dragon cargo mission in 2013 (CRS-2): the capsule’s Draco thrusters failed to initialize after separation due to stuck valves. The software immediately halted the vehicle’s approach to the ISS (safety first) and went into a passive safe mode. SpaceX remotely diagnosed the issue and uploaded a software workaround to cycle the valves, restoring full function and saving the mission. Such in-flight reconfiguration capability is a hallmark of SpaceX software – flexibility to handle the unexpected.
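Cross-checking redundant sensors can be sketched as "reject anything far from the median, then average what is left". This is one common approach and an assumption on my part; the source does not say which algorithm SpaceX uses. The sensor names and the deviation threshold are placeholders.

```python
from statistics import median


def fuse_readings(readings, max_deviation):
    """Reject readings far from the median, then average the rest.

    readings maps a sensor name to its value.
    Returns (fused_value, sorted_names_of_rejected_sensors).
    """
    if not readings:
        raise ValueError("no readings supplied")
    mid = median(readings.values())
    good = {name: value for name, value in readings.items()
            if abs(value - mid) <= max_deviation}
    if not good:
        # Sources disagree so badly that none can be trusted.
        raise RuntimeError("no consistent sources")
    rejected = sorted(set(readings) - set(good))
    fused = sum(good.values()) / len(good)
    return fused, rejected


if __name__ == "__main__":
    altitude_m = {"gps_a": 100.0, "gps_b": 101.0, "gps_c": 5000.0}
    print(fuse_readings(altitude_m, max_deviation=10.0))
```

The median is used as the reference because a single wild value cannot drag it far, whereas a plain average of all three readings would be pulled toward the faulty sensor.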

Even in the Starship program, which is at the cutting edge, the same reliability principles apply. Starship prototypes have experienced rapid unscheduled disassemblies (RUDs), but often the causes were structural or propulsion issues rather than software. In fact, the software has successfully controlled Starships through maneuvers like the dramatic belly-flop descent and flip maneuver on high-altitude flights SN8–SN15 in 2020–2021. Most of those flights ended in explosions, largely due to engine and fuel system problems, but the guidance software proved itself by controlling the vehicle aerodynamically, transitioning between engines, and (in SN15’s case) executing a soft landing. Each iteration’s data fed into software tweaks, e.g. tuning the control algorithms for the flaps or adjusting how the vehicle schedules engine relights. By the time of Starship’s more recent flights, the software was managing a smooth booster-stage separation, long-duration burns, and even some engine-out compensation on the booster. For example, if a Starship booster engine underperforms, the system can throttle up others to maintain trajectory, similar to Falcon 9’s strategy. The redundant engine count (33) plus smart software is meant to ensure the mission can continue despite failures. SpaceX also added redundancy in other areas: after an earlier Starship test, they increased the number of attitude control thrusters and made software changes to improve control authority during ascent. The quick evolution of Starship’s software, from solving a methane header tank pressure issue that caused a crash, to refining the timing of stage separation, to adjusting the Flight Termination System logic, shows SpaceX’s responsive engineering. In essence, they treat each test flight like a high-energy integration test: if something goes wrong, add more safeguards in code or hardware and try again. This rapid loop is only feasible because the team is small and empowered to push fixes without protracted bureaucracy.

Lastly, it’s worth noting that SpaceX’s software reliability isn’t just about onboard code. Their ground software and operations are also streamlined. Countdown and launch control software (which interfaces with pad systems, propellant loading, etc.) is automated to the point that a Falcon 9 countdown can proceed with minimal human intervention until a final “go” click. This ground software is built in-house (in one case, an engineer revealed a system called “WarpDrive” used for manifest and launch operations) and follows the same philosophy of rapid iteration and reuse. The overall software ecosystem, from manufacturing, to launch, to flight, is integrated. SpaceX can track a piece of hardware from the factory floor to flight via software, allowing quick identification of anomalies. For example, if a sensor starts acting up during a test, they can trace its serial number and see all data associated with its history. This integration further contributes to reliability by catching issues early and enabling swift troubleshooting.

Case Studies: Software in Action

To illustrate how these practices come together, let’s look at a couple of notable real-world instances involving SpaceX software:

Falcon 9 Engine-Out Anomaly (March 2020): During a Starlink launch, a Falcon 9 booster suffered a premature engine shutdown late in its first-stage burn. In a traditional launch this might spell disaster, but Falcon 9’s software barely skipped a beat. It detected the drop in thrust on one of the nine engines and rebalanced the load to the others, burning them slightly longer to make up the difference. The rocket achieved orbit as planned, deploying all 60 satellites. This was not luck but design: engineers had simulated engine-outs and coded the guidance to handle them. SpaceX’s post-flight analysis traced the shutdown to a small amount of residual cleaning fluid that ignited in flight, but because the software was robust, the mission succeeded regardless. The only impact was the booster missing its drone ship landing due to the altered flight profile. This event showcased the payoff of building resiliency, both in hardware (extra engines) and software (fault-tolerant control). It’s a dramatic example of software turning a potential failure into a success, reinforcing confidence for future crewed flights that the system can handle surprises.
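The "burn the remaining engines slightly longer" idea can be made concrete with a deliberately simplified calculation. It assumes constant and equal thrust per engine and asks only that the total impulse match the nominal plan; it ignores gravity losses, changing vehicle mass, and throttling, and the numbers in the usage example are placeholders rather than flight data.

```python
def compensated_burn_time(engines, nominal_burn_s, failure_time_s):
    """Cutoff time that restores the nominal total impulse after one engine fails.

    Nominal impulse:   engines * F * nominal_burn_s
    Delivered impulse: engines * F * failure_time_s
                       + (engines - 1) * F * (cutoff - failure_time_s)
    Setting the two equal and solving for cutoff gives the expression below.
    """
    if engines < 2:
        raise ValueError("need at least two engines to compensate")
    if not 0 <= failure_time_s <= nominal_burn_s:
        raise ValueError("failure must occur during the nominal burn")
    remaining_s = nominal_burn_s - failure_time_s
    return failure_time_s + engines * remaining_s / (engines - 1)


if __name__ == "__main__":
    # Placeholder numbers: 9 engines, 160 s planned burn, one engine lost at 140 s.
    print(compensated_burn_time(9, 160.0, 140.0))
```

With these placeholder values the cutoff moves from 160 s to 162.5 s. The later the failure, the smaller the correction, which is consistent with a late shutdown being recoverable with only a slightly longer burn.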

Starlink On-Orbit Upgrades: Operating the Starlink constellation provides a unique playground for software innovation. SpaceX can iterate on satellite software even after deployment – something traditional single-satellite missions cannot risk. For instance, when introducing a major new networking feature, instead of updating all satellites at once (which could be catastrophic if a bug slipped through), SpaceX uses a canary approach: update a small number of satellites and observe. In one discussion, SpaceX engineers described how they might enable a new inter-satellite communication protocol on just one satellite initially, run regression tests on the ground to ensure critical functions (like basic comms) aren’t broken, then monitor that satellite in orbit as it operates with the new feature. Telemetry and performance metrics are compared against expectations. Once they are confident, the update is rolled out gradually to the rest of the constellation. They can even revert the software on a satellite if needed. This agility was demonstrated when SpaceX rapidly updated hundreds of satellites to respond to spectrum tuning or to mitigate brightness (for astronomers’ concerns) by changing how satellites orient themselves. The speed at which SpaceX deploys such changes, often in days or weeks, stands in stark contrast to the years-long software update cycles of traditional satellites. It highlights how treating satellites more like “cloud servers in space” (with frequent software deployments) can revolutionize space operations. Of course, this is feasible only because the groundwork was laid with intensive testing, simulation of orbital scenarios, and an operations team that can handle quickly changing configurations. The result is a constellation whose capabilities have improved over time (for example, increasing throughput and adding laser inter-satellite links in newer versions) without requiring new hardware for each improvement, only new code.
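A staged (canary) rollout with automatic rollback can be sketched as follows. The stage fractions, satellite identifiers, and the `is_healthy` callback are assumptions for illustration; in practice the health check would compare telemetry against expectations over some observation period.

```python
def staged_rollout(fleet, new_version, is_healthy, stages=(0.01, 0.1, 1.0)):
    """Roll new_version out in growing stages, reverting everything on a failure.

    fleet maps a satellite id to its current version and is updated in place.
    is_healthy(sat_id) is a caller-supplied check run after each stage.
    Returns True on a complete rollout, False if the update was rolled back.
    """
    previous = dict(fleet)  # remembered so any stage can be reverted
    ids = sorted(fleet)
    updated = 0
    for fraction in stages:
        target = max(1, int(len(ids) * fraction))
        for sat_id in ids[updated:target]:
            fleet[sat_id] = new_version
        updated = max(updated, target)
        if not all(is_healthy(sat_id) for sat_id in ids[:updated]):
            for sat_id in ids[:updated]:
                fleet[sat_id] = previous[sat_id]
            return False
    return True


if __name__ == "__main__":
    fleet = {f"sat-{i:03d}": "v1" for i in range(200)}
    ok = staged_rollout(fleet, "v2", is_healthy=lambda sat_id: True)
    print(ok, set(fleet.values()))
```

With 200 satellites and the default stages, the first stage touches only two of them, so a bad build is caught while the rest of the fleet is still running the previous version.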

Crew Dragon In-Flight Reliability: SpaceX’s software has also proven itself in critical crewed situations. During the Crew-2 mission in 2021, the crew reported a slight issue with the cabin atmosphere: Dragon’s alert system indicated a malfunction in the waste management (toilet) fan. While not life-threatening, it illustrated how Dragon’s autonomous systems handle off-nominal events. The software detected abnormal conditions and alerted the crew and ground. SpaceX’s mission control was able to diagnose the problem (a mechanical issue) and send commands to mitigate it, all while the capsule’s primary life-support and navigation software continued nominally. In another instance, Crew Dragon demonstrated resilience in communications. During parts of reentry, a communications blackout is expected, but Dragon’s software is built to be fully autonomous during these periods. It doesn’t need ground input to deploy parachutes or manage reentry attitude; it’s all on autopilot, with triple-redundant checks to ensure actions occur at the right altitude and speed. The successful automated docking of Dragons to the ISS (now routine) is another case study: the guidance software uses computer vision and LIDAR to align with the station, and it has to be extremely reliable (with fail-safes to abort if targets deviate). In 2020, during early tests, SpaceX practiced many autonomous approaches and retreats to fine-tune this system. The fact that every docking since then has been smooth is a testament to that rigorous upfront simulation and the maturity of the software. No crew spacecraft has ever been so autonomous, and that is largely due to the confidence that the software will handle things correctly. NASA’s trust here was hard-won: SpaceX had to demonstrate through thousands of tests (simulated ISS docking runs, etc.) that Dragon’s code met the highest safety bars. This illustrates how SpaceX can be “fast” but also thorough when human life is involved, using its methodologies to achieve reliability faster rather than skimping on it.

Starship Rapid Iteration Post-Flight: After Starship’s first full-stack test in 2023 ended in an early termination, SpaceX compiled a long list of improvements; Musk noted “hundreds of changes”, including many software tweaks. For example, the timing for stage separation and engine ignition was adjusted in software to ensure a clean break; the flight termination logic (which took too long to break up the vehicle) was refined for quicker response; and the guidance algorithm was tuned to handle pad debris damage (the first launch shattered the concrete pad, which disrupted some sensors). By the 2024 flights, these issues were largely solved: Starship flight 4 reached space, and flight 5 flew its full mission profile. After flight 4’s partial success, SpaceX again iterated, improving heat shield software thresholds and the flip maneuver timing. This rapid software iteration between flights, often just a few months apart, is unheard of for rockets of this scale. It shows how SpaceX leverages its test data: each flight is instrumented to the hilt, recording every parameter. As soon as possible after a test, engineers pore over the data, update simulation models to match what happened, adjust code or parameters, run the new simulations to verify the fix, and deploy it for the next flight. It’s effectively a software sprint between launches. By flight 5, the Starship system was robust enough to perform a flawless booster return and catch. As SpaceX moves toward making Starship orbital and reusable, this software-driven iteration loop will continue to be critical – catching subtle bugs and improving performance with every launch.

Conclusion

SpaceX’s journey from a scrappy startup to the forefront of space exploration has been fueled not only by rocket hardware innovations but also by software excellence. The latest developments (Starship’s monumental flights, the massive Starlink network, and Dragon’s continued success) all underscore a truth: software is the invisible rocket fuel that has powered SpaceX’s rapid rise. By adopting Silicon Valley-style agile development, continuous integration, and a fail-fast mentality, SpaceX’s software team delivers features and fixes at a pace traditional aerospace would have thought impossible. Crucially, they’ve shown that speed and safety are not mutually exclusive: with the right processes (automated testing, modular architecture, redundancy), a small team can outperform giant organizations while maintaining reliability. SpaceX’s lean DevOps approach yields real competitive advantages – faster design cycles, lower costs, and the ability to adapt quickly to new goals or issues. This has enabled feats like landing and reusing boosters (with software guiding sub-meter precision), deploying new spacecraft yearly, and updating on-orbit systems the way one updates a smartphone app.

For software developers and engineering teams, SpaceX stands as an inspiration for what a high-performance culture can achieve. They demonstrate the power of integrating development and operations, of investing in your testing infrastructure, and of never being afraid to iterate. As we head further into the 2020s, SpaceX’s software will be tasked with even more, from fully autonomous Mars landings to perhaps managing dozens of Starships in a day. If the past is any guide, the team will continue to push the envelope of DevOps in space, setting new benchmarks for both reliability and velocity. In Elon Musk’s vision to make humans multi-planetary, the rockets will get the glory, but it’s the code inside them, crafted with care, tested in every way imaginable, and deployed with daring frequency, that will make it all possible.